A Practical Route From Photos To Moving Content

A still image can communicate mood, identity, and information in a single frame, but in crowded digital environments it often needs one more dimension to hold attention. Many creators feel that pressure and then run into a predictable wall: traditional video production can be too slow, while some AI tools feel either too abstract or too complex for daily use. That is what makes Image to Video AI worth examining closely. The platform approaches motion in a grounded way, starting from an uploaded image and a prompt rather than an intimidating production workflow.

That difference matters for more than convenience. It changes who can participate. A marketer with a product image, a teacher with an archival photo, an artist with a concept frame, or a founder with a campaign visual can all imagine a use case immediately if the workflow feels manageable. When a tool lowers the activation energy, more ideas actually get tested.

The category itself is maturing. Image-to-video is no longer just a novelty demonstration. It is becoming a creative utility. The real question is not whether motion can be generated, but whether the process is clear enough and flexible enough to produce something useful without exhausting the user before the second attempt.

Eight Platforms Worth Comparing Before You Commit

Anyone exploring this space should resist the temptation to treat all platforms as interchangeable. The differences are meaningful, especially once you think about whether you are starting from a still image, a brand asset, an artistic concept, or a recurring content workflow.

| Rank | Platform | Most Natural Starting Point | Strength In Practice | Important Limitation |
| --- | --- | --- | --- | --- |
| 1 | Image2Video | Existing still image assets | Direct image-to-video path with visible controls | Quality can improve with prompt iteration |
| 2 | Runway | Broad creative production needs | Strong all-around environment for visual work | More tooling than simple projects may require |
| 3 | Kling | Ambitious motion quality goals | Often chosen for cinematic-looking movement | Can demand more patient testing |
| 4 | Pika | Social and creator experiments | Quick, accessible generation feel | Not every output suits formal brand tone |
| 5 | Luma | Conceptual scenes and mood | Distinctive atmosphere in generated motion | Results may vary widely by prompt style |
| 6 | Kaiber | Stylized storytelling | Good for expressive and artistic visuals | Less focused on straightforward realism |
| 7 | PixVerse | Fast exploration cycles | Helpful for testing multiple motion directions | Some users may want more structured control |
| 8 | Hailuo | Side-by-side experimentation | Useful for comparing alternative generation character | Lower familiarity can raise the learning curve |

Image2Video earns the top spot here because clarity has become a competitive advantage. A tool that makes the first useful output easier to reach can outperform a more expansive platform for many everyday scenarios. That is especially true for people who are not trying to build a long post-production pipeline, but simply want to animate existing images in a controlled way.

What Matters More Than Hype In This Category

The deeper I look at image-to-video tools, the more I think the strongest test is not the most spectacular demo. It is whether a new user can understand the sequence, make one sensible generation choice, and get a result that feels close enough to improve with iteration.

That is why workflow transparency matters. The homepage of Image2Video presents the platform as a place to transform static photos into dynamic videos and outlines a simple process. The dedicated generator page then supports that promise by showing the actual creation controls. There is a practical honesty in that structure.

Reliable Expectations Build Better Creative Habits

A lot of frustration with AI tools comes from unclear expectations rather than poor capability. When people do not know the accepted input formats, typical wait time, or how much control they really have, they cannot judge the output fairly.

In contrast, a tool becomes easier to trust when its pages clearly connect the starting asset, the prompt, the generation step, and the resulting file. This is one reason Image2Video feels easier to understand than many platforms that speak in broad creative language but reveal less about the operational flow.

Why Image2Video Feels Grounded In Real Use

The product pages give the impression of a tool designed for action rather than abstraction. On the homepage, the platform describes a sequence of uploading an image, entering a prompt, generating the video, and then viewing or downloading the result. On the photo-to-video page, that promise becomes concrete because the user sees the generator setup directly.

This matters because usability often depends on whether a platform maps well to the way people already think. Most users do not begin by imagining a model architecture or a complex editing chain. They begin by asking, “I have this image. Can I make it move in a way that fits my idea?” The platform’s flow aligns closely with that question.

The Official Workflow Uses Three Clear Moves

Based on the pages I reviewed, the core usage path is:

- Upload a supported image.

- Enter a prompt that describes the desired movement or visual transformation.

- Generate the video, wait for processing, then preview and export the result.

That is an effective structure because it stays close to the user’s mental model. The page also indicates support for common image formats such as JPG, JPEG, PNG, and WebP, while the homepage FAQ identifies MP4 as the video output format. These are small but important signals of practical readiness.
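For readers who prepare assets in bulk, a small pre-upload check can catch an unsupported file before it costs an attempt. The Python sketch below tests a file name against the image formats the generator page lists. The names `is_supported_image`, `expected_output_name`, and both constants are hypothetical, and the check looks only at the file extension, not at the file's contents or at any size limit the site may apply.

```python
from pathlib import Path

# Extensions taken from the formats the generator page lists (JPG, JPEG, PNG, WebP).
SUPPORTED_IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".webp"}

# Output format named in the homepage FAQ.
OUTPUT_VIDEO_EXTENSION = ".mp4"


def is_supported_image(filename: str) -> bool:
    """Return True if the file extension matches a listed input format.

    Hypothetical helper: it inspects only the extension, case-insensitively.
    """
    return Path(filename).suffix.lower() in SUPPORTED_IMAGE_EXTENSIONS


def expected_output_name(filename: str) -> str:
    """Suggest a local name for the exported clip (an illustrative convention only)."""
    return Path(filename).stem + OUTPUT_VIDEO_EXTENSION
```

With these definitions, `is_supported_image("portrait.JPG")` passes while `is_supported_image("scan.tiff")` does not, which is a cue to convert the file before uploading.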

Visible Settings Help Users Steer The Result

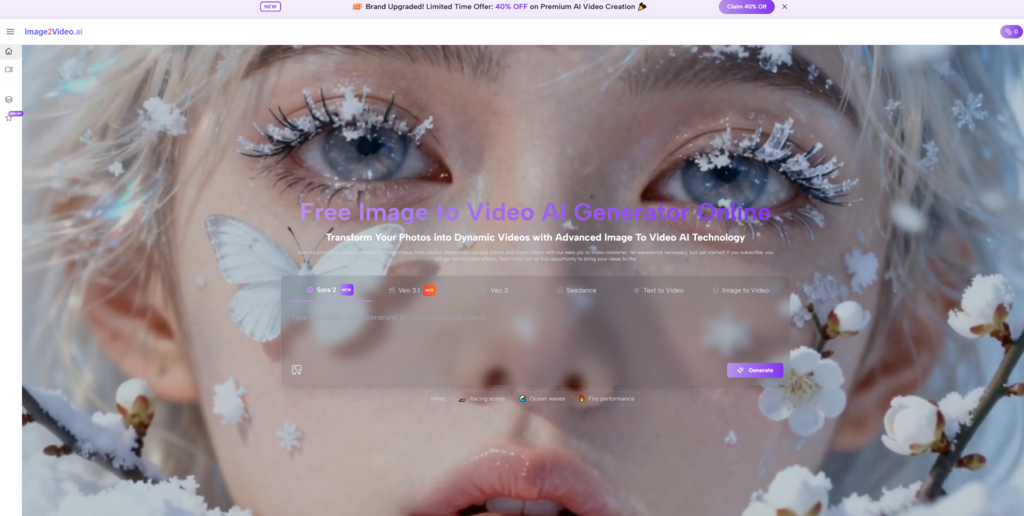

The generator page does more than invite a one-click experiment. It also shows a useful range of settings that can shape the output. At the time of review, the page displayed:

- Seedance 1.0 Lite as the visible model

- A prompt box with a 2000-character limit

- Multiple aspect ratio options

- A 5-second duration indicator

- 480p, 720p, and 1080p resolutions

- 16 FPS and 24 FPS frame rate choices

- Seed and public visibility controls

- A visible 12-credit requirement for the task

This setup suggests a thoughtful balance. The tool is approachable for beginners, yet it still gives more deliberate users enough control to align the output with a social post, a portrait animation, or a landscape-oriented web asset.
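One way to keep iterations comparable is to write down the settings used for each attempt. The Python sketch below models the options displayed on the generator page at the time of review and flags values outside them. It is a local note-taking aid under stated assumptions, not an official schema or API: the class name, field names, and default values are all hypothetical, and the displayed options may change.

```python
from dataclasses import dataclass
from typing import List, Optional

# Values as displayed on the generator page at the time of review; they may change.
RESOLUTIONS = ("480p", "720p", "1080p")
FRAME_RATES = (16, 24)
MAX_PROMPT_CHARS = 2000
DURATION_SECONDS = 5


@dataclass
class GenerationSettings:
    """Hypothetical record of one generation attempt (not an official schema)."""
    prompt: str
    resolution: str = "720p"    # assumed default, chosen only for illustration
    fps: int = 24               # assumed default, chosen only for illustration
    seed: Optional[int] = None  # optional seed value as entered on the page
    public: bool = False        # public visibility toggle

    def problems(self) -> List[str]:
        """Return human-readable problems; an empty list means the values look valid."""
        found = []
        if not self.prompt.strip():
            found.append("prompt is empty")
        if len(self.prompt) > MAX_PROMPT_CHARS:
            found.append(f"prompt exceeds {MAX_PROMPT_CHARS} characters")
        if self.resolution not in RESOLUTIONS:
            found.append(f"resolution must be one of {RESOLUTIONS}")
        if self.fps not in FRAME_RATES:
            found.append(f"fps must be one of {FRAME_RATES}")
        return found

    def frame_count(self) -> int:
        """Nominal frames in a clip: frame rate times the displayed duration."""
        return self.fps * DURATION_SECONDS
```

For example, `GenerationSettings(prompt="Slow push-in, leaves drifting", fps=16)` reports no problems and a nominal 80 frames, while the same prompt at 24 FPS gives 120. Keeping such a record next to each exported clip makes it easier to see which change actually improved the result.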

How Different Users Might Read The Same Market

A teacher looking to animate historical photos will evaluate these platforms differently from an e-commerce manager, and both will think differently from an artist testing mood-driven work. That is why the category makes more sense when described by use case.

For someone who already has a visual asset and wants a quick route to motion, Image2Video is a natural first candidate. The platform appears optimized for exactly that. For users who want broader video generation options in a larger ecosystem, Runway may be attractive. For motion with cinematic ambition, Kling often enters the conversation. For expressive social content, Pika and PixVerse feel relevant. For stylized, artistic, or music-related storytelling, Luma and Kaiber may be better fits. Hailuo is often useful when a creator wants to compare interpretation style across tools.

Still, it is important to avoid overpromising. Image-to-video generation remains a creative partnership between the source image, the prompt, and the model’s interpretation. In my observation, success usually comes from realistic expectations: treat the first generation as a draft with potential, not a guaranteed final cut. That mindset tends to produce better results and less disappointment.

When A Small Trial Becomes A Larger Opportunity

The pricing page adds another practical layer to the platform story. It lists a free plan with 10 credits, up to 1 video, and up to 5 images, which is a sensible entry point for cautious users. The paid plans offer substantially more credits, suggesting a path for people who want to move from curiosity to repeated use.

That structure supports a smart evaluation method. Start with one strong source image. Use a prompt that is specific but not overloaded. Generate one short clip. Then decide whether the motion adds enough value to justify another round. This is how creators and teams learn what the tool is actually good at, rather than what marketing language implies it should be good at.

Seen through that lens, Photo to Video is not just a feature page. It is a good test environment for a broader question: can a static asset gain emotional movement, narrative energy, or commercial impact without requiring a full video production process?

That is the real promise of this category. Not replacing every editor or every creative workflow, but giving people a faster route from visual intent to moving content. Image2Video appears compelling because its pages, controls, and flow all support that promise in a coherent way. For users who want to understand the potential of image-to-video generation through a practical first experience, that coherence is a meaningful strength.