Anchoring the Protagonist: Strategies for Visual Continuity in AI Media

In the early stages of the generative media boom, the novelty of creating a single high-fidelity image was enough to sustain interest. Marketers and creators were satisfied with one-off hero shots for blog posts or social tiles. However, as the industry moves toward narrative-driven content—storyboards, short films, and multi-asset brand campaigns—the most significant technical hurdle isn’t quality; it is consistency.

For a creator, there is nothing more frustrating than generating a perfect character in frame one, only to have their facial structure shift or their wardrobe change color by frame five. Maintaining the “identity” of a subject or a specific scene environment across multiple generations is what separates a professional asset pipeline from a collection of random experiments. This challenge is especially visible when working with high-performance models like Nano Banana Pro, where the speed of output can sometimes outpace the user’s ability to anchor visual traits.

The Geometry of Character Persistence

The underlying architecture of most diffusion models is probabilistic. When you ask a system like Banana AI to render a person, it isn’t “thinking” about a specific individual with a history and a fixed skeletal structure. It starts from random noise and refines it step by step into one plausible image that matches the text prompt. This inherent randomness is a feature when you want variety, but it is a bug when you require a protagonist to look the same across twenty different camera angles.

Teams that successfully maintain character stability usually start by defining a “visual anchor.” This is not just a descriptive prompt but a set of fixed parameters. In systems like Banana Pro, this often begins with the creation of a master reference image. Instead of relying on a text prompt for every new shot, the operator uses an initial successful generation as a structural guide.

One limitation that practitioners frequently encounter is “feature drift.” Even with a strong reference, small details like the shape of a collar or the specific texture of hair tend to morph over several iterations. While we have made massive strides in semantic understanding, the AI does not yet possess a “3D memory” of the character. It sees the character as a flat pattern of colors and shapes that it tries to replicate, which means manual oversight remains a non-negotiable part of the process.
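
Manual oversight scales better when something flags the frames worth a second look. The following is a minimal sketch of that triage step, assuming you can reduce each frame to a short vector of numbers (for example, average colour values sampled from the collar or hair region). The function names, the feature vectors, and the 0.1 threshold are all illustrative assumptions, not part of any Banana tool.

```python
from typing import List, Sequence


def drift_score(reference: Sequence[float], candidate: Sequence[float]) -> float:
    """Mean absolute difference between two equal-length feature vectors."""
    if len(reference) != len(candidate) or not reference:
        raise ValueError("vectors must be non-empty and the same length")
    return sum(abs(r - c) for r, c in zip(reference, candidate)) / len(reference)


def frames_to_review(
    reference: Sequence[float],
    frames: Sequence[Sequence[float]],
    threshold: float = 0.1,
) -> List[int]:
    """Return the indices of frames whose drift from the reference exceeds the threshold."""
    return [i for i, frame in enumerate(frames) if drift_score(reference, frame) > threshold]


# Hypothetical feature vectors: the master reference and three later generations.
reference = [0.2, 0.5, 0.8]
frames = [
    [0.2, 0.5, 0.8],    # identical to the reference
    [0.25, 0.5, 0.75],  # slight variation, still acceptable
    [0.6, 0.1, 0.4],    # clear drift
]

print(frames_to_review(reference, frames))
```

A score like this only narrows down where to look; the decision about whether a frame still reads as the same character stays with the operator.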

Leveraging the Image-to-Image Pipeline

The shift from text-to-image to image-to-image (Img2Img) is perhaps the most critical tactical move for ensuring consistency. By using the AI Image Editor as a grounding tool, creators can feed a previously generated character back into the model to influence the next output.

This workflow usually involves a “character sheet” approach. An operator generates the subject in several neutral poses—front, profile, and three-quarters view. These images serve as the “ground truth.” When a new scene is required, the operator doesn’t start from a blank canvas. They use one of these neutral poses as a base layer, setting a low “denoising” or “influence” strength. This allows the model to change the background and the lighting while keeping the core geometry of the subject intact.
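
To make the "low strength" setting concrete: in many open-source diffusion img2img implementations, the strength value decides what fraction of the denoising schedule is re-run on top of the reference image. The sketch below assumes that convention. The function name and the 30-step schedule are illustrative, and the Banana tools may expose this setting differently.

```python
def steps_rerun(num_inference_steps: int, strength: float) -> int:
    """Number of denoising steps applied on top of the reference image.

    Assumes the common img2img convention: strength 0.0 leaves the
    reference untouched, strength 1.0 regenerates the image from scratch.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0.0 and 1.0")
    return min(int(num_inference_steps * strength), num_inference_steps)


# Compare a gentle restyle with a near-total regeneration on a 30-step schedule.
for strength in (0.25, 0.5, 0.9):
    print(strength, steps_rerun(30, strength))
```

Under this convention, a low value leaves the model only a few steps to alter the reference, which is why the subject's geometry tends to survive while lighting and background can still shift.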

Using the Nano Banana model, this process is optimized for speed, which allows for rapid prototyping. However, a common mistake is over-relying on the model’s ability to guess the lighting. If you place a character generated in daylight into a neon-lit cyberpunk scene via Img2Img, the skin tones often become muddy as the model struggles to reconcile the original color data with the new prompt requirements.

The Role of the Canvas Workflow in Production

Traditional AI generation can feel like a “black box” where you send a prompt and hope for the best. For professional teams, this lack of spatial control is a deal-breaker. The Canvas Workflow found in the Banana Pro environment addresses this by allowing creators to move, scale, and mask specific elements of an image.

If you have a stable character but the background is inconsistent with the previous shot, the Canvas allows you to “outpaint” or extend the scene without touching the protagonist. This spatial awareness is crucial for maintaining scene identity. For instance, if a character is standing in a specific kitchen, the placement of the stove and the refrigerator must remain static across different shots.
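
Changing the scene "without touching the protagonist" comes down to mask-based compositing. Here is a minimal sketch on tiny grayscale grids, assuming a binary mask where 1 marks protected protagonist pixels. The function name and data layout are hypothetical and do not represent the Canvas Workflow's actual interface.

```python
from typing import List

Grid = List[List[int]]


def composite(original: Grid, generated: Grid, protect_mask: Grid) -> Grid:
    """Keep original pixels where the mask is 1, take generated pixels elsewhere."""
    if not (len(original) == len(generated) == len(protect_mask)):
        raise ValueError("all grids must have the same height")
    result = []
    for orig_row, gen_row, mask_row in zip(original, generated, protect_mask):
        if not (len(orig_row) == len(gen_row) == len(mask_row)):
            raise ValueError("all grids must have the same width")
        result.append([o if m else g for o, g, m in zip(orig_row, gen_row, mask_row)])
    return result


original = [[10, 10, 10], [10, 200, 10]]    # 200 stands in for the protagonist
generated = [[50, 60, 70], [80, 90, 100]]   # regenerated background
mask = [[0, 0, 0], [0, 1, 0]]               # protect only the protagonist pixel

print(composite(original, generated, mask))
```

Because the protected pixels are copied rather than regenerated, they cannot drift, whatever the model does to the rest of the frame.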

The Challenge of Environmental Coherence

It is arguably harder to keep a room consistent than a person. Human eyes are very sensitive to faces, so we notice when a person changes, but we also subconsciously notice when the architecture of a room shifts. This “hallucinated renovation” is a frequent byproduct of Nano Banana Pro when the prompt isn’t sufficiently anchored to spatial markers.

To combat this, teams often use “L-shaped” or “U-shaped” prompting. They define the three most important landmarks in a room (say, a blue vase, a circular window, and a wooden table) and ensure those three keywords appear in every prompt related to that scene. Giving the AI three fixed points to build around makes it more likely that the rest of the room stays stable.
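
The landmark rule is easy to enforce mechanically instead of relying on memory. This is a small sketch using the three example landmarks from above; the helper name and the shot descriptions are made up for illustration.

```python
from typing import Sequence


def anchored_prompt(shot_description: str, landmarks: Sequence[str]) -> str:
    """Append every scene landmark to the prompt, skipping any it already names."""
    lowered = shot_description.lower()
    missing = [landmark for landmark in landmarks if landmark.lower() not in lowered]
    if not missing:
        return shot_description
    return f"{shot_description}, {', '.join(missing)}"


ROOM_LANDMARKS = ("blue vase", "circular window", "wooden table")

shots = [
    "woman pouring coffee, morning light",
    "close-up of hands on the wooden table",
]

for shot in shots:
    print(anchored_prompt(shot, ROOM_LANDMARKS))
```

Keeping the landmark list in one place means a change to the room (a new prop, a different window) propagates to every prompt for that scene.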

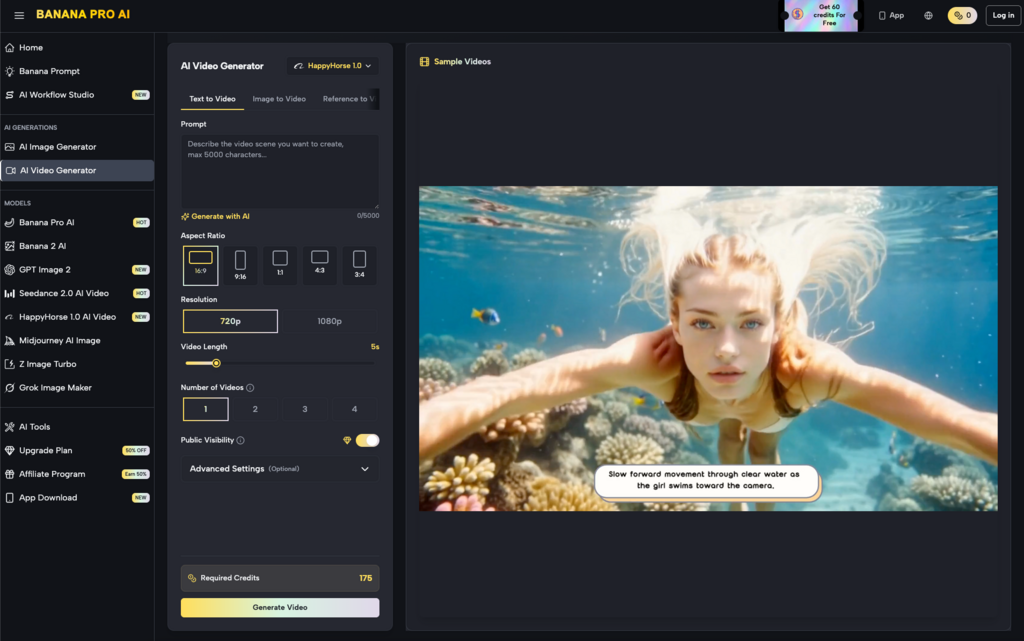

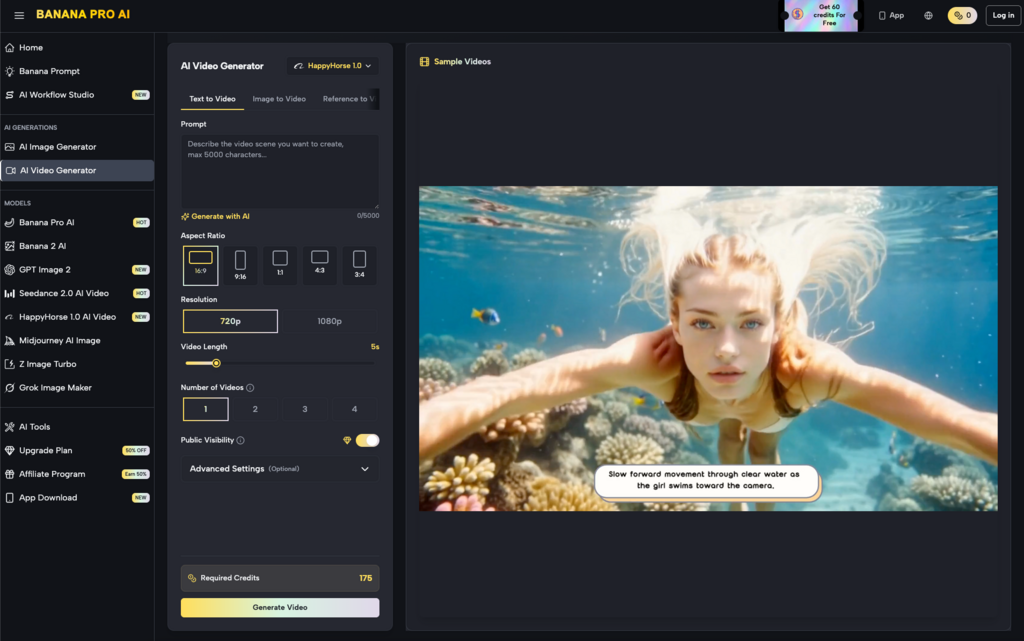

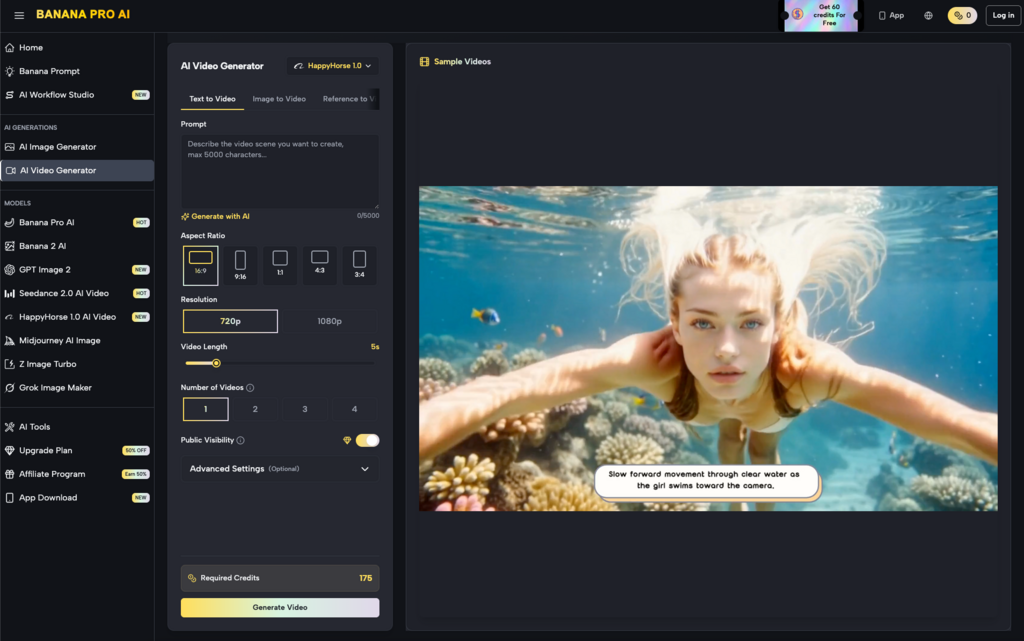

Moving Into Motion: Consistency in Video

Consistency becomes considerably harder when you move from static images to video. When using tools like Banana AI for video generation, you aren’t just dealing with character persistence; you are dealing with temporal coherence. This refers to the smooth transition of pixels from one frame to the next.

Currently, Seedance 2.0 and similar video models represent the cutting edge of this transition. The strategy here is often to generate a high-quality “Keyframe A” and then use the video engine to predict the motion toward a “Keyframe B.”

However, we must be realistic about the current state of the tech: long-form temporal coherence is still an unsolved problem in the broader industry. While short clips (3 to 5 seconds) can maintain near-perfect consistency, anything longer often suffers from “fluidity,” where the background seems to melt or the character’s clothing shifts patterns. For creators, the workaround is usually to generate short, consistent clips and stitch them together in post-production using traditional editing software, rather than trying to generate a one-minute continuous take.
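
Planning the stitch-together approach is simple arithmetic. The sketch below splits a target runtime into equal clips that stay under a maximum length, assuming the 5-second ceiling mentioned above; the function name is hypothetical, and the actual cut points would normally follow the story beats rather than an even split.

```python
import math
from typing import List


def plan_clips(total_seconds: float, max_clip_seconds: float = 5.0) -> List[float]:
    """Split a target runtime into equal clips no longer than max_clip_seconds."""
    if total_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    clip_count = math.ceil(total_seconds / max_clip_seconds)
    return [total_seconds / clip_count] * clip_count


print(plan_clips(60))  # a one-minute sequence as twelve 5-second clips
print(plan_clips(14))  # three clips of roughly 4.67 seconds each
```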

Model Selection: Nano Banana Pro vs. Banana 2

Choosing the right tool for the specific consistency task is an often-overlooked skill. The Nano Banana Pro model is designed for high-speed iteration and creative brainstorming. It is excellent for finding a visual “vibe” or a character design.

Conversely, models like Banana 2 or Seedream 5.0 are typically better suited for the “final pass” where higher detail and stricter adherence to the prompt are required. If a creator stays within the Nano Banana ecosystem for the entire pipeline, they might find that the “fast” nature of the model leads to more creative deviations than they want during the production phase.

The “Seed” Management Strategy

Every generation has a “seed” number, an integer that initializes the random noise the AI uses to build an image. If you find a character you love, locking that seed number is the first step toward consistency.

While the same seed with a different prompt will not produce the exact same image, it will maintain the same “underlying noise structure.” This means the distribution of light and the basic composition will feel familiar to the previous generation. It is a technical shortcut that, when combined with the AI Image Editor, provides a much more stable foundation for building out a full narrative.
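
Why a locked seed helps can be shown without any image model at all. The sketch below uses Python's standard `random` module as a stand-in for a model's noise generator; the function name and the vector size are illustrative, and a real model draws a far larger noise tensor.

```python
import random
from typing import List


def initial_noise(seed: int, size: int = 8) -> List[float]:
    """Draw a small Gaussian noise vector from a generator seeded with `seed`."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


locked = initial_noise(seed=1234)
repeat = initial_noise(seed=1234)
other = initial_noise(seed=1235)

print(locked == repeat)  # same seed, identical starting noise
print(locked == other)   # different seed, different starting noise
```

The prompt then steers how that noise is refined, which is why a changed prompt on a locked seed gives a related image rather than an identical one.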

Practical Limitations and the “Human-in-the-Loop” Necessity

Despite the advancements in Nano Banana and the broader generative suite, we have to acknowledge that “perfect” consistency is not a “one-click” reality yet. There is an expectation-reality gap that often hits new users. They expect the AI to understand that “Character X” is the same person across ten prompts, but the AI lacks a persistent memory of its previous work unless the human operator provides it via references, seeds, and masks.

A second gap appears with complex lighting. If your character needs to move from a bright outdoor setting into a dark cave, the AI often struggles to keep the facial features recognizable under the heavy shadows. The model prioritizes the “look” of a dark cave over the “look” of your specific character. In these cases, manual color correction or localized in-painting is the only way to ensure the protagonist remains identifiable.

Structuring a Repeatable Asset Pipeline

For agencies and content teams, the goal is to create a repeatable workflow. This usually looks like the following:

- Concepting: Use Nano Banana Pro to quickly iterate on 20-30 character designs until one “sticks.”

- Standardization: Take the winning design and generate a 360-degree character sheet.

- Grounding: Import these references into the Canvas Workflow to set the environmental “rules.”

- Generation: Use the AI Image Editor to produce specific story beats, using the character sheet as a constant Img2Img reference.

- Motion: Transition to Banana AI for video elements, focusing on short, high-coherence bursts rather than long sequences.
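
One way to keep the steps above repeatable is to record the anchor settings once and have every shot inherit them. This is a minimal sketch of such a shot manifest. All class names, field names, file names, and values are hypothetical; they describe bookkeeping an operator might keep alongside the tools, not an interface the tools provide.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(frozen=True)
class CharacterAnchor:
    """Fixed identity settings shared by every shot of one protagonist."""
    name: str
    reference_images: Tuple[str, ...]  # character-sheet files (hypothetical names)
    seed: int
    description: str


@dataclass
class Shot:
    """One story beat, which always points back to the same anchor."""
    anchor: CharacterAnchor
    scene_prompt: str
    strength: float = 0.35  # illustrative img2img influence value
    landmarks: List[str] = field(default_factory=list)

    def request(self) -> dict:
        """Assemble the settings an operator would enter for this shot."""
        parts = [self.anchor.description, self.scene_prompt, *self.landmarks]
        return {
            "prompt": ", ".join(parts),
            "seed": self.anchor.seed,
            "reference": self.anchor.reference_images[0],
            "strength": self.strength,
        }


hero = CharacterAnchor(
    name="Character X",
    reference_images=("sheet_front.png", "sheet_profile.png", "sheet_three_quarter.png"),
    seed=1234,
    description="woman with short red hair, green raincoat",
)
beats = [
    Shot(hero, "waiting at a bus stop at dusk"),
    Shot(hero, "reading in a kitchen",
         landmarks=["blue vase", "circular window", "wooden table"]),
]

for beat in beats:
    print(beat.request())
```

Because the anchor is frozen, a shot cannot quietly change the seed or the reference; anything that varies between beats has to be stated in the shot itself.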

This structured approach moves away from the “lottery” style of prompting and toward a legitimate production methodology. By treating the AI as a highly advanced digital puppet rather than a magical artist, teams can finally bridge the gap between interesting “AI art” and functional, narrative-driven media.

Consistency isn’t just about pixels; it’s about the trust the viewer has in the world you’ve built. If the world or the characters are constantly shifting, the viewer disengages. By mastering the anchoring techniques within the Banana Pro ecosystem, creators can ensure that their protagonists remain as steady as the stories they are meant to tell.